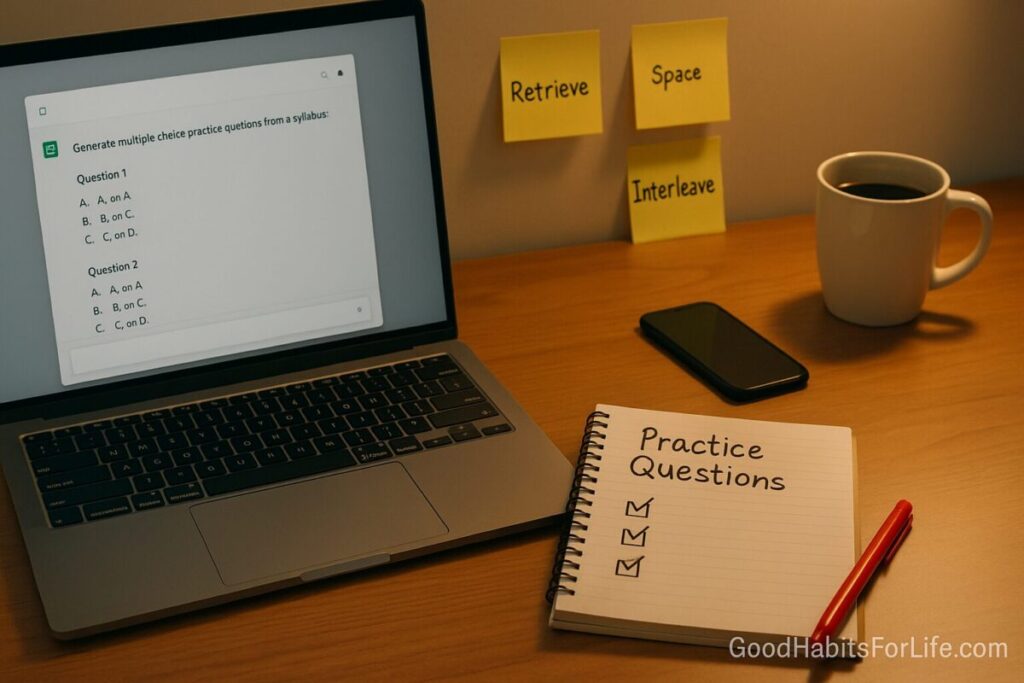

Generate Practice Questions with AI (Then Check)

🧭 What & Why

Definition. AI-assisted practice questions are study items (MCQs, short answers, scenarios) drafted by a language model from your syllabus, then verified and refined by you before use.

Why it works. Learning improves when you retrieve information (the testing effect), study in spaced sessions, and interleave topics, not when you just reread notes. Retrieval practice reliably strengthens memory; spacing and interleaving reduce forgetting and improve transfer. PubMed

Feedback matters. Specific, timely formative feedback (even self-generated via answer keys/explanations) accelerates learning; meta-analyses find feedback has one of the largest impacts on achievement when it targets task/process and guides next steps. PMC

Embrace productive struggle. Trying, erring, and then receiving instruction can deepen understanding (“productive failure”), especially on complex problems. Use AI to stage that struggle safely. PubMed

Use AI responsibly. Follow education-sector guidance: be transparent, protect privacy, and verify outputs, because AI can err. UNESCO

✅ Quick Start (Do This Today)

- Pick a narrow objective (30–60 min). Example: “Balance redox reactions in acidic solution” or “Explain the difference between classical and operant conditioning.”
- Paste clean inputs. Share only non-sensitive text: topic, key terms, page ranges/lecture titles. (Avoid personal data.) OECD AI
- Generate a mixed set (10–15 items). Ask for 40% MCQ, 40% short answer, 20% mini-scenarios; request answers + brief explanations, and difficulty from easy→hard.
- Self-test first (closed book). Timebox: 15–20 min. Mark answers but hide explanations until you commit.
- Verify before you trust. Check a sample (start with 30%) against your textbook/lecture slides and a reputable source; correct or discard weak items. Scale down to 10–15% spot-checks once accuracy proves stable. SAGE Journals
- Schedule spaced reviews. Revisit in 1 day, 1 week, 3 weeks; interleave with adjacent topics. laplab.ucsd.edu
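The review schedule is simple date arithmetic, so it is easy to compute rather than track by hand. The sketch below is only an illustration: the function name `review_dates` is hypothetical, and it assumes each interval (1, 7, 21 days) is counted from the first study session rather than from the previous review.

```python
from datetime import date, timedelta

# Offsets in days after the first study session (1 day, 1 week, 3 weeks).
# Assumption: every offset is measured from the first session.
REVIEW_OFFSETS_DAYS = (1, 7, 21)


def review_dates(first_session: date, offsets=REVIEW_OFFSETS_DAYS) -> list[date]:
    """Return the dates on which a topic should be revisited."""
    return [first_session + timedelta(days=d) for d in offsets]


if __name__ == "__main__":
    for when in review_dates(date(2025, 3, 3)):
        print(when.isoformat())
    # prints 2025-03-04, 2025-03-10, 2025-03-24 (one per line)
```

If your exam is close, passing shorter offsets (for example `(1, 3, 7)`) compresses the same pattern.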

🗓️ Habit Plan — 30-60-90 Roadmap

Days 1–30 (Build the engine).

- Create 3 topic banks/week (≈30–45 items each).
- Apply the Question Quality Rubric (below) to the first 10 items per bank.
- Run spaced reviews at 1d/7d/21d intervals. laplab.ucsd.edu

Days 31–60 (Refine & interleave).

- Interleave old + new topics in every session.
- Add error logs: for each miss, write a one-sentence “why” and a corrected micro-card. Wiley Online Library
- Trim low-quality items; keep only those with clear learning value.

Days 61–90 (Scale & specialize).

- Expand formats (case-based, data sets, practical calculations).
- Shift from 30% verification to 10–15% random spot-checks if accuracy remains high.
- Share your bank with a peer for blind testing and feedback. PMC

🧠 Techniques & Frameworks (Evidence-Aligned)

- Retrieval Practice: Frequent low-stakes tests beat rereading for long-term retention. PubMed
- Spacing: Review after increasing intervals (e.g., 1–7–21 days) to counter forgetting. PubMed
- Interleaving: Mix problem types/chapters to improve discrimination and transfer. Wiley Online Library
- Formative Feedback: Give task-focused, actionable notes (what to fix/how), not just scores. SAGE Journals
- Productive Failure: Let yourself attempt challenging items before seeing worked solutions. PubMed
- Integrity & Privacy Guardrails: Keep a human in the loop; don’t paste sensitive data; cite sources; declare AI use if your course requires it. UNESCO

🛠️ Building & Checking Your Question Bank

The 10-Point Question Quality Rubric (score 0–2 per line; aim ≥15/20)

- Accuracy: Facts, formulas, and references are correct.
- Alignment: Matches the learning objective and syllabus language.
- Cognitive Level: Uses the intended Bloom level (recall → analyze → create).
- Clarity: Clear stem; unambiguous phrasing; no double negatives.
- Plausible Distractors (MCQ): Wrong options reflect common misconceptions.
- Explanation Quality: Concise reasoning/steps for the correct answer.
- Bias & Fairness: No stereotypes; accessible wording.
- Originality: Not a verbatim copy of textbook questions.
- Difficulty Curve: Mix of easy/medium/hard; label difficulty.
- Source Traceability: Cite page/slide/section used to verify.
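To make the scoring concrete, the rubric can be tallied with a few lines of Python. This is a minimal sketch, not a prescribed tool: the criterion keys and the `score_item` helper are hypothetical names, and it assumes every item is scored on all ten criteria (how to score "Plausible Distractors" for non-MCQ items is left to you).

```python
# Hypothetical keys for the ten rubric criteria, in the order listed above.
RUBRIC = (
    "accuracy", "alignment", "cognitive_level", "clarity",
    "plausible_distractors", "explanation_quality", "bias_fairness",
    "originality", "difficulty_curve", "source_traceability",
)
PASS_MARK = 15  # the "aim for at least 15/20" target


def score_item(scores: dict[str, int]) -> tuple[int, bool]:
    """Sum the 0-2 scores and report whether the item meets the pass mark."""
    missing = [c for c in RUBRIC if c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    for criterion in RUBRIC:
        if scores[criterion] not in (0, 1, 2):
            raise ValueError(f"{criterion} must be scored 0, 1, or 2")
    total = sum(scores[c] for c in RUBRIC)
    return total, total >= PASS_MARK


if __name__ == "__main__":
    example = {c: 2 for c in RUBRIC}
    example["clarity"] = 1
    example["originality"] = 1
    print(score_item(example))  # (18, True)
```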

Verification Workflow (fast)

- Stage 1 (Sampling): Check the first 30% of items line-by-line against your textbook/lecture notes + one reputable source (e.g., university site, peer-reviewed article). Reduce to 10–15% random spot-checks after 3 clean batches. SAGE Journals
- Stage 2 (Feedback pass): Revise explanations for any misses; add why-it’s-wrong notes to each distractor (MCQs). Feedback is most effective when specific and task-focused. SAGE Journals
- Stage 3 (Spaced/interleaved scheduling): Slot items into your 1–7–21 day cycle and mix neighboring topics. PubMed
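The Stage 1 sampling rule can be sketched in code as follows. The function name `items_to_verify` is hypothetical, and two details are assumptions: the spot-check rate uses the upper end of the 10–15% range, and fractional counts are rounded up so small batches still get checked.

```python
import math
import random

FULL_CHECK_PERCENT = 30   # Stage 1: check the first 30% of a batch
SPOT_CHECK_PERCENT = 15   # assumption: upper end of the 10-15% range
CLEAN_BATCHES_NEEDED = 3  # switch to spot-checks after 3 clean batches


def items_to_verify(items, clean_batches, rng=None):
    """Pick which items of a batch to check against your sources."""
    rng = rng or random.Random()
    items = list(items)
    if clean_batches < CLEAN_BATCHES_NEEDED:
        count = math.ceil(len(items) * FULL_CHECK_PERCENT / 100)
        return items[:count]  # the first 30%, in order
    count = math.ceil(len(items) * SPOT_CHECK_PERCENT / 100)
    return rng.sample(items, count)  # random spot-check


if __name__ == "__main__":
    batch = [f"Q{i}" for i in range(1, 21)]
    print(items_to_verify(batch, clean_batches=0))  # Q1 through Q6
    print(len(items_to_verify(batch, clean_batches=3)))  # 3
```

Passing a seeded `random.Random` makes the spot-check selection reproducible, which helps if you want to log exactly which items were verified.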

👥 Audience Variations

- Students (school/college): Keep banks small (≤40 per topic). Add “exam-style” sections matching your past papers.
- Parents: Use simple language, 3-option MCQs, and read-aloud mode for younger learners.
- Professionals: Focus on scenarios, decision trees, and regulation citations.
- Seniors/Returning Learners: Favor short sessions (20–25 min) with generous explanations and larger fonts; extend spacing to 2–14–30 days. PubMed
- Teens: Gamify with streaks and timed quizzes; add myth-busting distractors that reflect common misconceptions.

⚠️ Mistakes & Myths to Avoid

- Myth: “AI questions are automatically correct.” Models can be confidently wrong; always verify. UNESCO
- Mistake: Only MCQs. Include short answers and scenarios to reach higher Bloom levels.
- Myth: “More items = more learning.” Better to prune aggressively and schedule spaced reviews. PubMed
- Mistake: Vague feedback. Replace “revise Chapter 3” with a one-line fix (“confused ANOVA vs. ANCOVA; review assumptions”). SAGE Journals

🗣️ Real-Life Examples & Copy-Paste Prompts

1) Prompt to Generate the First Batch

You are a tutor. Create 15 practice questions on [topic] from [pages/slides] for [course/level]. Mix: 6 MCQ, 6 short-answer, 3 scenario items. Label difficulty (easy/med/hard). Provide correct answers and 1–3 sentence explanations. Vary contexts and include common misconceptions in distractors. Align with these objectives: [paste objectives]. Do not invent facts beyond the sources; if uncertain, say “needs source.”
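If you reuse this prompt often, a small script can fill in the bracketed fields and keep the 40/40/20 mix consistent for any batch size. The sketch below is illustrative only: `mix_counts` and `build_prompt` are hypothetical helpers, and the rounding rule (scenarios take whatever remains) is an assumption.

```python
# Hypothetical helper: fills the first-batch prompt above for a given topic.
PROMPT_TEMPLATE = (
    "You are a tutor. Create {total} practice questions on {topic} from {sources} "
    "for {level}. Mix: {mcq} MCQ, {short} short-answer, {scenario} scenario items. "
    "Label difficulty (easy/med/hard). Provide correct answers and 1–3 sentence "
    "explanations. Vary contexts and include common misconceptions in distractors. "
    "Align with these objectives: {objectives}. Do not invent facts beyond the "
    "sources; if uncertain, say “needs source.”"
)


def mix_counts(total: int) -> tuple[int, int, int]:
    """Split a batch into roughly 40% MCQ, 40% short answer, 20% scenarios."""
    mcq = round(total * 40 / 100)
    short = round(total * 40 / 100)
    scenario = total - mcq - short  # remainder keeps the counts summing to total
    return mcq, short, scenario


def build_prompt(topic, sources, level, objectives, total=15):
    """Return the prompt text with all fields filled in."""
    mcq, short, scenario = mix_counts(total)
    return PROMPT_TEMPLATE.format(
        total=total, topic=topic, sources=sources, level=level,
        mcq=mcq, short=short, scenario=scenario, objectives=objectives,
    )


if __name__ == "__main__":
    print(mix_counts(15))  # (6, 6, 3)
    print(build_prompt("redox reactions", "lecture slides 4-6",
                       "first-year chemistry", "balance redox equations"))
```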

2) Prompt to Tighten Explanations

Improve each explanation to be specific and step-wise (max 70 words). Highlight the key step that often causes errors. If numerical, show working. Add a one-line hint for reattempt.

3) Prompt for Interleaving

Blend 10 items from Topic A with 10 from Topic B. Ensure alternating concepts and include 4 comparison questions that force discrimination.
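If you already have two verified item lists, you can also alternate them locally instead of asking the model to do it. This is a minimal sketch with a hypothetical `interleave` helper; it only handles the alternation, so the comparison questions mentioned in the prompt would still need to be written separately.

```python
from itertools import chain, zip_longest


def interleave(topic_a, topic_b):
    """Alternate items from two topics: A1, B1, A2, B2, ...

    If one list is longer, its leftover items are appended at the end.
    """
    skip = object()  # sentinel marking the gaps in the shorter list
    pairs = zip_longest(topic_a, topic_b, fillvalue=skip)
    return [item for item in chain.from_iterable(pairs) if item is not skip]


if __name__ == "__main__":
    print(interleave(["A1", "A2", "A3"], ["B1", "B2"]))
    # ['A1', 'B1', 'A2', 'B2', 'A3']
```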

4) Verification Checklist (paste into notes)

- Factually correct vs. textbook/page ___
- Objective match (___)
- Level match (Bloom: ___)
- Clear stem & options
- Explanation fixes misconception ___
- No bias/ambiguous wording

5) Error Log Template

Missed Q#: ___. Concept: ___. Why I missed it: ___. Fix in 1 sentence: ___. New micro-card: ___. Next review: __/__/__ (1–7–21).

📚 Tools, Apps & Resources (quick picks)

- Spaced-repetition: Anki, RemNote, Mnemosyne (SRS scheduling; tags; LaTeX).
- Open question banks: OpenStax (peer-reviewed textbooks), Khan Academy (mastery questions).
- Note/Quiz builders: Obsidian (Flashcards/Spaced Repetition plugins), Notion databases for item tracking.
- Verification sources (examples): University sites (.edu), PubMed/PMC for biomedical, government standards. (Prefer primary/peer-reviewed or recognized institutional pages for checking.) PMC

Pros/cons:

- SRS tools: strong spacing, but they require setup.
- OpenStax/Khan: trusted foundations, but they may not match your syllabus precisely.
- Notes apps: flexible, and you build your own taxonomy, but they need discipline.

🔑 Key Takeaways

- Retrieval + spacing + interleaving are the core; AI is your item generator, not the final authority. PubMed
- Always verify (sample → revise → spot-check).
- Feedback should be specific and actionable to accelerate improvement. SAGE Journals
- Follow integrity/privacy guidance from recognized bodies; document your sources. UNESCO

❓ FAQs

1) Is it okay to use AI for practice questions?

Yes—if your course permits AI for studying and you verify the items. Be transparent and avoid pasting sensitive information. UNESCO

2) How many items per topic are ideal?

Start with 30–45 and prune to the best ~25. Quality beats quantity; schedule spaced reviews. PubMed

3) What mix of formats works best?

Blend MCQ, short answer, and scenarios; interleave topics to improve transfer. Wiley Online Library

4) Can I trust AI explanations?

Treat them as drafts. Check against textbooks/lecture slides or reputable sources; edit for clarity. SAGE Journals

5) How often should I review?

A simple 1–7–21 day schedule works well; adjust to your exam date and difficulty. laplab.ucsd.edu

6) How do I give myself good feedback?

Use the rubric, write why you missed an item, and add a micro-card to fix the misconception. Targeted, timely feedback is what helps. SAGE Journals

7) What about making mistakes on purpose?

Attempting hard problems before instruction (with later correction) can deepen learning on complex tasks. Use judiciously. PubMed

8) Do I need citations in my study notes?

For factual subjects, yes—note the page or source used to verify each item. This improves traceability and trust. SAGE Journals

📖 References

- Roediger, H. L., & Karpicke, J. D. (2006). Test-Enhanced Learning. Psychological Science. https://doi.org/10.1111/j.1467-9280.2006.01693.x
- Dunlosky, J., et al. (2013). Improving Students’ Learning With Effective Learning Techniques. Psychological Science in the Public Interest. https://doi.org/10.1177/1529100612453266

- Cepeda, N. J., et al. (2006). Distributed practice in verbal recall tasks: A review and quantitative synthesis. Psychological Bulletin. https://pubmed.ncbi.nlm.nih.gov/16719566/

- Cepeda, N. J., et al. (2008). Spacing Effects in Learning. Psychological Science. (PDF) https://laplab.ucsd.edu/articles/Cepeda%20et%202008_psychsci.pdf

- Taylor, K., & Rohrer, D. (2010). The effects of interleaved practice. Applied Cognitive Psychology. https://onlinelibrary.wiley.com/doi/10.1002/acp.1598

- Hattie, J., & Timperley, H. (2007). The Power of Feedback. Review of Educational Research. (PDF) https://conselhopedagogico.tecnico.ulisboa.pt/files/sites/32/hattie-and-timperley-2007.pdf
- Shute, V. J. (2008). Focus on Formative Feedback. Review of Educational Research. https://journals.sagepub.com/doi/10.3102/0034654307313795
- Kapur, M. (2014). Productive failure in learning math. Cognitive Science. https://pubmed.ncbi.nlm.nih.gov/24628487/
- UNESCO (2023, updated 2025). Guidance for Generative AI in Education and Research. https://www.unesco.org/en/articles/guidance-generative-ai-education-and-research
- OECD.AI (2019/2024). OECD AI Principles. https://oecd.ai/en/ai-principles