Fact-Checking AI: Citations, Sources, and Sanity Checks

🧭 What & Why

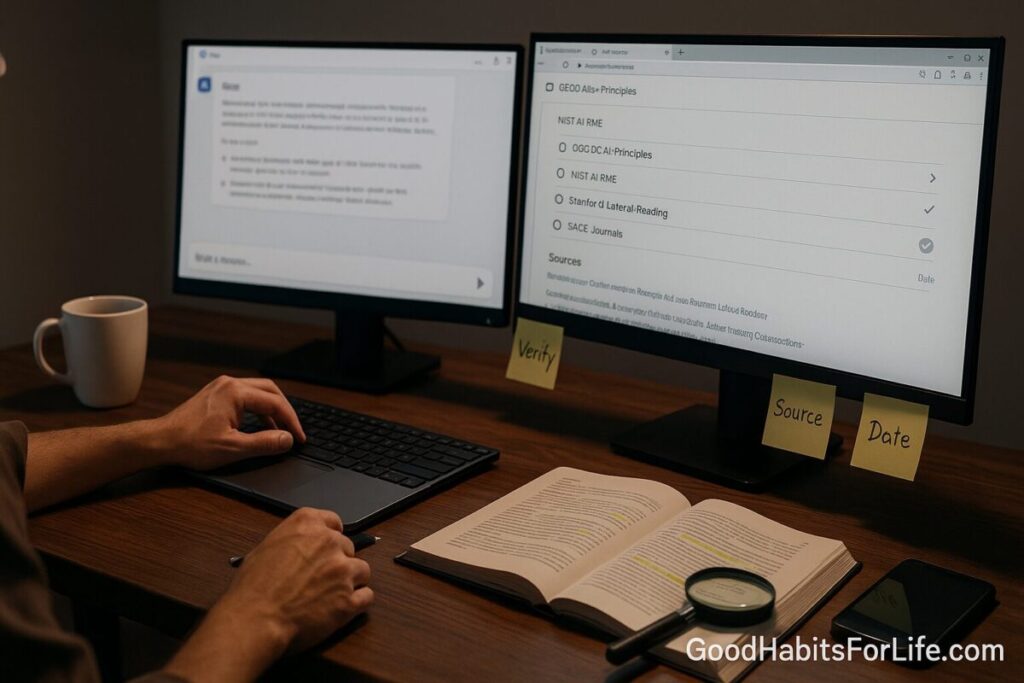

AI is excellent at drafting but not inherently authoritative. Models generate language that sounds plausible, and without external grounding they may “hallucinate” facts or citations. Responsible AI use means verifying outputs before publishing, especially for education, health, and policy topics. NIST’s AI Risk Management Framework and the OECD AI Principles both emphasize trustworthiness, human oversight, and provenance. (NIST Publications; OECD AI)

Research on digital literacy shows that expert fact-checkers don’t just read a page vertically; they read laterally, opening new tabs to investigate the source before trusting the claim. In the cited studies, this habit outperformed on-site checklists for judging credibility, which makes it a sound foundation for vetting AI outputs. (stacks.stanford.edu; SAGE Journals)

✅ Quick Start (Do This Today)

- Pin the claim. Copy the exact sentence or number the AI produced.
- Ask for sources. If your tool doesn’t cite, prompt: “Cite primary sources with working links (DOIs if possible).”
- Open three tabs: the source itself, a separate search about the source, and a search for independent coverage of the same claim. This is lateral reading. (stacks.stanford.edu)
- Prefer primary sources. Look for the original paper, official statistics page, law, dataset, or standard.
- Check authority and accountability. Who published it? Are there corrections, disclosed conflicts, or clear methods? IFCN signatories, standards bodies such as NIST and OECD, and major newsrooms and journals generally publish their methods and corrections policies. (ifcncodeofprinciples.poynter.org; NIST Publications)
- Run sanity checks. Verify dates, units, denominators, and sample sizes, and confirm that the quote actually appears in the source.
- Capture a durable link. Save the DOI or permalink, and archive the page with the Wayback Machine for future reference. (help.archive.org)
- Log it. Keep a short citation note in your CMS (title, URL/DOI, date accessed, archive link).
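
To make the “Log it” step concrete, here is one possible shape for a citation note, sketched in Python. The class `CitationNote`, its fields, and the `missing_fields` check are hypothetical names chosen for illustration, not a feature of any particular CMS; adapt them to whatever your notes field accepts.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class CitationNote:
    """Hypothetical citation note: one record per verified claim."""
    claim: str                          # exact sentence or number the AI produced
    title: str                          # title of the source you verified against
    url: str                            # canonical URL or permalink
    doi: Optional[str] = None           # bare DOI, e.g. "10.1177/..."
    archive_url: Optional[str] = None   # Wayback Machine snapshot, if captured
    accessed: str = field(default_factory=lambda: date.today().isoformat())

    def missing_fields(self) -> List[str]:
        """Return durability fields that are still empty before publishing."""
        gaps = []
        if not (self.doi or self.url):
            gaps.append("doi_or_url")
        if not self.archive_url:
            gaps.append("archive_url")
        return gaps

    def to_json(self) -> str:
        """Serialize the note so it can be pasted into a CMS notes field."""
        return json.dumps(asdict(self), ensure_ascii=False)


note = CitationNote(
    claim="40% of adults do X each day.",
    title="Example survey report",
    url="https://example.org/report",
    accessed="2024-05-01",
)
print(note.missing_fields())  # ['archive_url']
```

Keeping the note as structured data rather than free text makes it easy to flag entries that still lack an archive link before publication.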

🛠️ Techniques & Frameworks (SIFT, Lateral Reading)

SIFT (Mike Caulfield):

- Stop (recognize the need to pause).
- Investigate the source (who’s behind it?).
- Find better coverage (independent confirmation).
- Trace claims to the original context (primary source).

Use SIFT when reviewing AI outputs, especially when they cite blogs or reprints rather than the original. (guides.lib.uchicago.edu; Pressbooks)

Lateral Reading:

Open new tabs to evaluate the site itself and find other reporting before you read the claim in depth. This mirrors how professional fact-checkers work and helps you avoid getting trapped by a single page’s design or tone. (stacks.stanford.edu)
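
A lateral-reading session can be prepared in one step by generating the three tabs from the source URL and the claim. This is a minimal sketch: it assumes a search engine that takes a `q` query parameter (Google is used here only as an example) and that the `-site:` operator excludes the source’s own pages. `lateral_reading_tabs` is a hypothetical helper.

```python
from typing import Dict
from urllib.parse import quote_plus, urlparse

# Assumption: any search engine that accepts a ?q= parameter works here.
SEARCH_URL = "https://www.google.com/search?q="


def lateral_reading_tabs(source_url: str, claim: str) -> Dict[str, str]:
    """Build three tabs: the source, the source's reputation, independent coverage."""
    domain = urlparse(source_url).netloc.lower()
    if domain.startswith("www."):
        domain = domain[len("www."):]
    return {
        # Tab 1: the page the AI cited.
        "source": source_url,
        # Tab 2: what other sites say about this publisher (its own pages excluded).
        "about_source": SEARCH_URL + quote_plus(f'"{domain}" -site:{domain}'),
        # Tab 3: who else reports the same claim, outside the original site.
        "independent_coverage": SEARCH_URL + quote_plus(f'"{claim}" -site:{domain}'),
    }


for name, url in lateral_reading_tabs(
    "https://www.example-news.net/story/123", "40% of adults do X each day"
).items():
    print(f"{name}: {url}")
```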

📚 Citations That Stick (Primary, DOIs, Archiving)

- Primary over secondary. Prefer the original paper, dataset, statute, or standard. Secondary explainers are useful but shouldn’t be your only source.
- DOIs and permalinks. When available, store a DOI (for journal articles) or a canonical permalink (for newsrooms and standards bodies).
- Archive for durability. Use the Wayback Machine’s “Save Page Now” feature or its browser extension to capture a timestamped snapshot, which helps if links change. (help.archive.org)
- Source transparency. For news claims, check whether the publisher follows an accountability code (e.g., the IFCN Code of Principles for dedicated fact-checkers). (ifcncodeofprinciples.poynter.org)
- Domain ownership. For suspicious sites, run a WHOIS lookup via ICANN Lookup to see registration data and spot red flags. (ICANN Lookup)
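
DOIs and archive links are easier to store consistently if you normalize them first. The sketch below assumes the public `https://doi.org/` resolver, the Wayback Machine’s `web.archive.org/save/` capture URL, and its availability endpoint at `archive.org/wayback/available`; the function names are illustrative, and the DOI regex is a heuristic rather than a full validator. It only builds and parses URLs, so it runs without network access.

```python
import re
from typing import Dict, Optional
from urllib.parse import quote

# Heuristic DOI pattern: "10." + registrant code + "/" + suffix.
DOI_PATTERN = re.compile(r"10\.\d{4,9}/\S+")


def normalize_doi(text: str) -> Optional[str]:
    """Extract a bare DOI from 'doi:10...', 'https://doi.org/10...', or prose."""
    match = DOI_PATTERN.search(text)
    if not match:
        return None
    # Trailing punctuation usually belongs to the surrounding sentence.
    return match.group(0).rstrip(".,;")


def doi_link(doi: str) -> str:
    """Resolver link for a bare DOI."""
    return "https://doi.org/" + doi


def wayback_urls(url: str) -> Dict[str, str]:
    """Links for capturing a page and for checking existing snapshots."""
    return {
        # Opening this in a browser asks the Wayback Machine to capture the page.
        "save_page_now": "https://web.archive.org/save/" + url,
        # JSON endpoint that reports the closest existing snapshot, if any.
        "availability_api": "https://archive.org/wayback/available?url="
        + quote(url, safe=""),
    }


def closest_snapshot(availability_json: dict) -> Optional[str]:
    """Pull the snapshot URL out of an availability-endpoint response."""
    closest = availability_json.get("archived_snapshots", {}).get("closest", {})
    return closest.get("url") if closest.get("available") else None


print(doi_link(normalize_doi("doi:10.1177/016146811912101102")))
# https://doi.org/10.1177/016146811912101102
```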

Mini-checklist for each citation:

- Does the linked page actually support the quoted claim?
- Is the date current enough for the topic?
- Is there a better primary source?
- Did you archive it and log a note?
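
The first two checklist items can be partly automated. This sketch assumes you have already saved the source page as plain text; it checks whether the quoted wording appears verbatim (after normalizing whitespace, case, and curly quotes) and whether the source is older than a freshness window you choose per topic. The helper names and the three-year window in the example are hypothetical. A match only shows the words are on the page; you still need to read the surrounding context to confirm they support the claim.

```python
import re
import unicodedata
from datetime import date

# Map typographic quotes to ASCII so curly and straight quotes compare equal.
_QUOTE_MAP = str.maketrans({"\u2018": "'", "\u2019": "'", "\u201c": '"', "\u201d": '"'})


def _normalize(text: str) -> str:
    """Unicode-normalize, unify quotes, collapse whitespace, lowercase."""
    text = unicodedata.normalize("NFKC", text).translate(_QUOTE_MAP)
    return re.sub(r"\s+", " ", text).strip().lower()


def quote_appears(quote: str, page_text: str) -> bool:
    """True if the quoted wording occurs in the page text after normalization."""
    return _normalize(quote) in _normalize(page_text)


def is_stale(published: date, max_age_days: int, today: date) -> bool:
    """True if the source is older than the freshness window set for the topic."""
    return (today - published).days > max_age_days


page = "In 2023, about 40% of\n adults said they do X each day."
print(quote_appears("40% of adults", page))  # True
print(quote_appears("45% of adults", page))  # False
print(is_stale(date(2019, 6, 1), max_age_days=3 * 365, today=date(2024, 6, 1)))  # True
```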

🧠 Grounded AI: Retrieval & Source-Aware Workflows

Purely generative systems can invent details. One remedy is retrieval-augmented generation (RAG): the system retrieves passages from a vetted corpus and generates an answer that cites the retrieved documents. RAG can reduce factual drift and improve traceability, especially when the corpus is your own knowledge base or a set of trusted publishers. (arXiv)
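
To show the shape of a retrieval-grounded workflow, here is a deliberately simple sketch. It swaps in keyword-overlap scoring for the dense neural retriever used in the original RAG work, and it stops at building the prompt rather than calling any particular model. The corpus, document IDs, and function names are hypothetical. The point it illustrates is that every passage the model sees carries an ID it can cite, so each claim in the answer can be traced back to a vetted document.

```python
import re
from collections import Counter
from typing import Dict, List, Tuple


def _tokens(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for a real retriever's encoder."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: Dict[str, str], k: int = 2) -> List[Tuple[str, str]]:
    """Rank passages by shared-word overlap with the query and keep the top k."""
    q = _tokens(query)
    scored = []
    for doc_id, passage in corpus.items():
        overlap = sum((q & _tokens(passage)).values())  # multiset intersection
        if overlap:
            scored.append((overlap, doc_id, passage))
    scored.sort(key=lambda item: (-item[0], item[1]))  # best first, ties by id
    return [(doc_id, passage) for _, doc_id, passage in scored[:k]]


def grounded_prompt(question: str, hits: List[Tuple[str, str]]) -> str:
    """Build a prompt that restricts the model to the retrieved, labeled passages."""
    context = "\n".join(f"[{doc_id}] {passage}" for doc_id, passage in hits)
    return (
        "Answer using ONLY the sources below and cite each claim as [id]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


corpus = {
    "nist-rmf": "The NIST AI RMF describes characteristics of trustworthy AI systems.",
    "wayback": "Save Page Now creates an archived snapshot of a web page.",
    "sift": "SIFT stands for Stop, Investigate the source, Find better coverage, Trace claims.",
}
question = "How do I save a snapshot of a web page?"
hits = retrieve(question, corpus, k=1)
print(grounded_prompt(question, hits))
```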

For research-heavy work, choose AI tools that:

- Show sources inline (links or footnotes).
- Allow custom corpora (your PDFs, policies, datasets).
- Support “open in source” (jump from a claim to its document).
- Log provenance (URLs/DOIs, timestamps, content hashes or archive links).
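
For the provenance point, a content hash lets you detect later whether a source changed after you cited it. This is a minimal sketch using SHA-256 from Python’s standard library; the record layout and function names are assumptions, and in practice you would hash the exact bytes you downloaded.

```python
import hashlib
from datetime import datetime, timezone
from typing import Optional


def provenance_record(url: str, content: bytes, doi: Optional[str] = None,
                      archive_url: Optional[str] = None) -> dict:
    """Record where a retrieved document came from plus a fingerprint of its bytes."""
    return {
        "url": url,
        "doi": doi,
        "archive_url": archive_url,
        "retrieved_at": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "sha256": hashlib.sha256(content).hexdigest(),  # changes if the content changes
    }


def content_changed(record: dict, new_content: bytes) -> bool:
    """Compare a fresh download against the stored fingerprint."""
    return hashlib.sha256(new_content).hexdigest() != record["sha256"]


record = provenance_record("https://example.org/policy", b"Policy text, version 1")
print(content_changed(record, b"Policy text, version 1"))  # False
print(content_changed(record, b"Policy text, version 2"))  # True
```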

Recent surveys and industry coverage highlight RAG’s role in reducing hallucinations and making outputs verifiable, particularly as major vendors adopt source-citing features. (arXiv)

👥 Audience Variations

Students & Teens

- Make SIFT and lateral reading your default. Avoid citing the AI; cite the sources it helped you find. (Pressbooks)

Professionals

- Store DOIs and permalinks in a shared library, and standardize an archive-on-save workflow (Wayback Machine). (help.archive.org)

Educators & Parents

- Teach the rule “open two tabs: one for the source, one for the source about the source,” and model it live in class. (SAGE Journals)

Editors/Publishers

- Require claims review for numbers, health and legal statements, and AI-generated passages, in line with the trustworthiness themes of the NIST and OECD frameworks. (NIST Publications)

⚠️ Mistakes & Myths to Avoid

- Myth: “If the AI gave a link, it’s true.” Reality: Links can be outdated, misinterpreted, or invented; always open and read them.
- Mistake: Citing aggregator posts instead of the original study.
- Mistake: Confusing press releases with peer-reviewed evidence.
- Myth: “Wikipedia is banned.” Reality: It’s fine as a map to primary sources; use it to find DOIs and references, not as your only citation.
- Mistake: Not archiving volatile pages (e.g., policy documents that change). Use Save Page Now. (help.archive.org)

💬 Real-Life Examples & Scripts

Example 1: The suspicious statistic

- AI output: “40% of adults do X each day.”
- Script: “Show the primary source with a working link and publication year.” → Open the link → Verify the publisher (government, university, or journal) → Check the sample size and date → Archive it → Log the DOI.
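
For the “check the sample size” step, a quick sanity check is the approximate margin of error for a reported proportion, z·√(p(1−p)/n), with z ≈ 1.96 for 95% confidence. This sketch assumes a simple random sample; real surveys often use weighting, so their published margins can be larger. It also checks that a reported percentage matches its underlying counts. The function names and the 0.5-point tolerance are hypothetical.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for proportion p, simple random sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)


def percentage_matches(reported_pct: float, numerator: int, denominator: int,
                       tolerance_pts: float = 0.5) -> bool:
    """Does the reported percentage agree with the underlying counts?"""
    return abs(100 * numerator / denominator - reported_pct) <= tolerance_pts


for n in (100, 1000):
    print(f"n={n}: 40% ± {100 * margin_of_error(0.40, n):.1f} points")
# n=100: 40% ± 9.6 points
# n=1000: 40% ± 3.0 points
print(percentage_matches(40, numerator=412, denominator=1030))  # True (412/1030 = 40.0%)
```

A “40%” from 100 respondents is consistent with anything from roughly 30% to 50%, so the same headline can be much weaker than it sounds.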

Example 2: Viral image

- AI output: “This photo shows event Y in 2010.”
- Script: “Find the original publication of this image and any authoritative reverse-image search results.” → Use Google’s reverse-image guidance and Fact Check Explorer to look for prior debunks → Check EXIF data and context, if available → Archive. (blog.google)

Example 3: Unknown website

- AI output: “Source: example-news.net.”
- Script: Run a WHOIS lookup on the domain (ICANN Lookup) → Check the “About,” “Staff,” and “Corrections” pages → Search for independent coverage of the same claim → Decide whether to trust or discard the source. (ICANN Lookup)
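
The WHOIS step can also be scripted. ICANN Lookup now draws on RDAP, the JSON-based successor to WHOIS, and RDAP domain records list dated `events` such as `registration`. This sketch assumes the public `rdap.org` redirector for routing queries and parses a sample response offline; the sample data and helper names are illustrative. A recently registered domain is a reason to dig further, not proof that a site is unreliable.

```python
from datetime import datetime, timezone
from typing import Optional

# Assumption: rdap.org redirects a domain query to the registry's RDAP server.
RDAP_BOOTSTRAP = "https://rdap.org/domain/"


def rdap_url(domain: str) -> str:
    """Query URL for a domain's RDAP record."""
    return RDAP_BOOTSTRAP + domain.strip().strip(".").lower()


def registration_date(rdap_json: dict) -> Optional[datetime]:
    """Find the 'registration' event in an RDAP domain response, if present."""
    for event in rdap_json.get("events", []):
        if event.get("eventAction") == "registration":
            # Older Pythons' fromisoformat needs "+00:00" rather than "Z".
            return datetime.fromisoformat(event["eventDate"].replace("Z", "+00:00"))
    return None


def domain_age_days(rdap_json: dict, now: datetime) -> Optional[int]:
    """Days since registration, or None if the response has no registration event."""
    registered = registration_date(rdap_json)
    return None if registered is None else (now - registered).days


# Illustrative response fragment (not real registry data).
sample = {
    "ldhName": "example-news.net",
    "events": [
        {"eventAction": "registration", "eventDate": "2024-01-15T00:00:00Z"},
        {"eventAction": "expiration", "eventDate": "2025-01-15T00:00:00Z"},
    ],
}
age = domain_age_days(sample, now=datetime(2024, 3, 15, tzinfo=timezone.utc))
print(rdap_url("Example-News.net"), age)  # https://rdap.org/domain/example-news.net 60
```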

🧰 Tools, Apps & Resources (Pros/Cons)

- Google Fact Check Explorer: Finds existing fact checks (ClaimReview). Pro: Fast aggregation. Con: Coverage varies by language and topic. (Google Toolbox)
- Wayback Machine: Archived snapshots (Save Page Now) and page histories. Pro: Combats link rot. Con: Some sites block archiving, and the service is occasionally limited. (help.archive.org)
- ICANN Lookup (WHOIS): Domain registration data. Pro: Reveals ownership clues. Con: Privacy proxies can mask details. (ICANN Lookup)
- Google Search fact-checking features: “About this result,” reverse image search, and context tips. Pro: Built in and quick. Con: Still requires judgment. (blog.google)
- Crossref, Google Scholar, and publisher sites: DOIs and canonical versions. Pro: Authoritative links. Con: Paywalls (use abstracts or cite official pages when needed).
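
If you check claims in bulk, Google also offers a Fact Check Tools API that exposes ClaimReview data similar to what Fact Check Explorer shows. The endpoint, parameter names, and response fields below reflect our understanding of its `claims:search` method and should be confirmed against Google’s current documentation; the sample response is made up, and real requests need an API key. The sketch builds the request URL and flattens the ClaimReview entries into rows you can scan.

```python
from typing import Dict, List
from urllib.parse import urlencode

# Assumed endpoint for the Fact Check Tools API (v1alpha1); verify against Google's docs.
ENDPOINT = "https://factchecktools.googleapis.com/v1alpha1/claims:search"


def claim_search_url(query: str, api_key: str, language: str = "en") -> str:
    """Build a claims:search request URL; the key passed in is your own API key."""
    params = {"query": query, "languageCode": language, "key": api_key}
    return ENDPOINT + "?" + urlencode(params)


def summarize_reviews(response: dict) -> List[Dict[str, str]]:
    """Flatten each claim's ClaimReview entries into publisher / rating / link rows."""
    rows = []
    for claim in response.get("claims", []):
        for review in claim.get("claimReview", []):
            rows.append({
                "claim": claim.get("text", ""),
                "publisher": review.get("publisher", {}).get("name", ""),
                "rating": review.get("textualRating", ""),
                "url": review.get("url", ""),
            })
    return rows


# Illustrative response shape (made-up values, not real fact checks).
sample = {
    "claims": [{
        "text": "This photo shows event Y in 2010.",
        "claimReview": [{
            "publisher": {"name": "Example Fact Check", "site": "factcheck.example"},
            "url": "https://factcheck.example/photo-y",
            "textualRating": "Miscaptioned",
        }],
    }]
}
print(claim_search_url("photo event Y 2010", "YOUR_API_KEY"))
for row in summarize_reviews(sample):
    print(row["publisher"], "|", row["rating"], "|", row["url"])
```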

🗺️ Habit Plan (7-Day Starter)

Day 1: Set up a citation workflow: a notes field in your CMS with Claim → Source → DOI/permalink → Archive URL → Date accessed.

Day 2: Practice SIFT on three AI-generated claims and log your results. (guides.lib.uchicago.edu)

Day 3: Build a lateral reading routine (open three tabs) for any new site you cite. (stacks.stanford.edu)

Day 4: Adopt a “primary first” rule, and update two existing posts with original sources and archived links. (help.archive.org)

Day 5: Choose an AI setup that displays sources or uses retrieval over your trusted library, and test it on one article. (arXiv)

Day 6: Create a sanity-check checklist (dates, denominators, conflicts, exact quotes).

Day 7: Host a 30-minute team review, and compare two examples where verification changed your conclusion.

🧩 Key Takeaways

- Treat AI like a fast research assistant that needs supervision.
- Use SIFT and lateral reading to investigate sources first. (guides.lib.uchicago.edu)
- Prefer primary sources with DOIs or permalinks, and archive them for durability. (help.archive.org)
- Favor retrieval-grounded AI that shows citations and lets you open the underlying documents. (NeurIPS Proceedings)

❓ FAQs

1) Can I cite AI (ChatGPT, etc.) as a source?

Cite the sources AI helps you find, not the AI itself. If you must acknowledge AI assistance, do so transparently in editorial notes or methodology.

2) What if the AI’s link is broken or the content changed?

Search for the DOI or canonical page and create a Wayback snapshot. Update your post with the stable link and archive URL. (help.archive.org)

3) Is Wikipedia acceptable?

Use it as a starting map to find primary sources and DOIs—do not rely on it as your only citation.

4) How do I verify an image the AI referenced?

Use reverse image search and look up existing debunks in Fact Check Explorer; check dates, locations, and context. (blog.google)

5) What does “lateral reading” actually look like?

Open tabs to investigate the publisher and find independent reporting before trusting the content you’re reading. (stacks.stanford.edu)

6) How does RAG help with citations?

RAG retrieves passages from a vetted corpus and can surface links or footnotes for them, which can reduce hallucinations and improve traceability. (arXiv)

7) How do I spot a sketchy website the AI quoted?

Check domain registration (ICANN Lookup), look for transparent “About,” “Corrections,” and “Funding” pages, and seek better coverage. (ICANN Lookup)

8) Are there standards for trustworthy AI use?

Yes. See NIST’s AI RMF and the OECD AI Principles, which stress reliability, transparency, and accountability. (NIST Publications)

📚 References

- NIST. AI Risk Management Framework (AI RMF 1.0). 2023. https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf
- OECD. OECD AI Principles. https://oecd.ai/en/ai-principles
- Wineburg, S., & McGrew, S. Lateral Reading and the Nature of Expertise. Stanford History Education Group. https://stacks.stanford.edu/file/druid:yk133ht8603/…
- Wineburg, S., et al. “Reading Less and Learning More…” Teachers College Record (2019). https://journals.sagepub.com/doi/10.1177/016146811912101102
- Caulfield, M. SIFT / Four Moves (guides and summary). https://pressbooks.pub/webliteracy/chapter/four-strategies/
- University of Chicago Library. “The SIFT Method.” https://guides.lib.uchicago.edu/c.php?g=1241077&p=9082322
- Lewis, P., et al. “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks.” NeurIPS 2020. https://proceedings.neurips.cc/paper/2020/file/6b493230205f780e1bc26945df7481e5-Paper.pdf
- Ye, F., et al. “Retrieval-Augmented Generation: A Comprehensive Survey.” 2024. https://arxiv.org/pdf/2406.13249
- Google News Initiative. “Google Fact Check Tools.” https://newsinitiative.withgoogle.com/resources/trainings/google-fact-check-tools/
- Internet Archive Help. “Using the Wayback Machine / Save Page Now.” https://help.archive.org/help/using-the-wayback-machine/
- ICANN. “ICANN Lookup / RDAP update.” https://lookup.icann.org/ and https://www.icann.org/en/blogs/details/updated-lookup-tool-…
- Poynter, International Fact-Checking Network. Code of Principles. https://ifcncodeofprinciples.poynter.org/